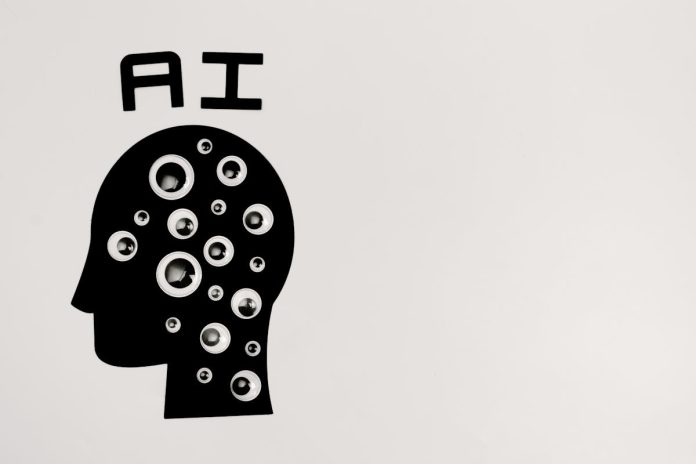

The rapid advancement of artificial intelligence has propelled us into an era where the lines between organic and synthesized interaction are increasingly blurred. From sophisticated chatbots to virtual companions like Replika and Character.AI, these digital entities are now capable of simulating empathy, offering comfort, and engaging in conversations that feel remarkably personal.

This technological leap inevitably provokes a profound question: Can AI be a girlfriend? While the immediate answer might seem to hinge on definitions of consciousness or sentience, a deeper examination reveals that the question itself is saturated with societal expectations and ethical complexities.

These AI companions are not merely sophisticated algorithms; they are often meticulously designed to fulfill emotional and social needs, frequently mimicking, and thereby reinforcing, traditionally feminine roles of nurturer, listener, and comforter.

This essay will explore the ethical implications of such design, scrutinize the potential for these technologies to deepen loneliness rather than alleviate it, and analyze how these AI interactions reflect and perhaps reinforce problematic societal expectations about gender, emotional labor, and the fundamental nature of human connection.

The allure of the AI companion lies in its promise: an always-available, non-judgmental confidante, an attentive listener, and a perpetually understanding presence. In an increasingly isolated world, where genuine human connection can be challenging to forge and maintain, these virtual entities offer a seductive alternative.

Users are drawn to their seemingly perfect understanding, their capacity to remember details (or simulate memory), and their unwavering support, all delivered without the complexities, demands, or potential for conflict inherent in human relationships.

For individuals struggling with social anxiety, loneliness, or a lack of emotional support, an AI companion can initially feel like a harmless escape, a convenient therapist, or even a lifeline. The sophisticated natural language processing allows for fluid, personalized responses that can create a compelling illusion of intimacy, making it easy to perceive the AI as a genuine source of comfort and validation.

However, this very appeal masks a more insidious truth about the design and sociological impact of these digital partners.

Contemporary AI companions are engineered to perform emotional labor, a concept famously articulated by sociologist Arlie Hochschild. In Hochschild’s formulation, emotional labor is the management of feeling to create a publicly observable facial and bodily display, performed for a wage. The term is now also widely applied to personal relationships in which one person consistently takes on the burden of managing another’s emotional state.

In the context of AI girlfriends, this labor is programmed. These AIs are designed to embody the perfect nurturer: ready to offer affirmation, validate feelings, and provide unwavering comfort. They are the ideal listener: infinitely patient, never interrupting, and always geared towards understanding and reflecting the user’s emotional state.

They function as tireless comforters, immediately responding to distress with soothing words, virtual hugs, or empathetic statements.

Crucially, these programmed roles overwhelmingly align with traditional, often stereotypical, feminine emotional labor. Historically and culturally, women have been socialized to be the primary emotional caregivers in relationships, families, and even professional settings.

They are expected to be empathetic, selfless, and supportive, and to prioritize the emotional needs of others above their own. By replicating these traits in AI, we create digital entities that are stripped of agency, are always “on call,” and exist solely to serve the user’s emotional needs. This mirrors an idealized, and deeply problematic, view of a female partner as an inexhaustible wellspring of emotional support, without any reciprocal demands or personal boundaries.

The AI girlfriend becomes the ultimate projection of a partner who exists purely for the user’s benefit, embodying an idealized feminine role devoid of the messy reality of human autonomy.

This deliberate mimicry of feminine emotional labor raises significant ethical implications and risks reinforcing harmful gender stereotypes.

When an AI girlfriend is designed to be the “perfect” partner—unconditionally supportive, non-challenging, and perpetually agreeable—it cultivates an unrealistic expectation for human relationships. Real human partners have their own needs, desires, perspectives, and emotional boundaries. They will, by necessity, sometimes disagree, challenge, or simply not be available to provide constant emotional reinforcement.

An AI, by contrast, is configured to avoid conflict, to always affirm, and to be endlessly adaptable to the user’s emotional state. This can foster a distorted view of what constitutes a healthy relationship, potentially leading users to find real-world interactions unsatisfying or overly demanding because they lack the frictionless perfection of their digital counterpart.

Furthermore, the existence and popularity of AI companions performing this emotional labor risk perpetuating the notion that such selfless, one-sided emotional service is the primary value proposition of a female-coded entity.

This popularity normalizes the outsourcing of complex, emotionally taxing work to a subservient digital being, rendering that work invisible and devalued. This can have spillover effects on human relationships, implicitly suggesting that women’s emotional labor is something to be consumed rather than reciprocated, or that their primary role is to manage the emotional landscape of others.

The fundamental lack of reciprocity in an AI relationship is key: an AI cannot genuinely care, suffer, celebrate, or grow in the way a human can. It processes and generates responses based on algorithms; it does not experience mutual vulnerability or shared humanity.

This one-way street of emotional expenditure by the user, met only by programmed responses, is a poor substitute for the intricate, reciprocal dance of human connection.

Beyond the reinforcement of gendered expectations, the rise of AI companions demands a critical look at their promise to alleviate loneliness.

While they may offer an initial sense of connection and validation for those who feel isolated or misunderstood, that relief often proves to be a palliative, not a cure. The connection offered by an AI is, by its very nature, a simulacrum: a convincing imitation that lacks the substance of genuine human interaction.

It is a controlled environment where the user can project their desires and vulnerabilities without much risk of rejection or fundamental disagreement, as the AI is programmed to cater to their expressed needs.

This “comfort zone,” however, can inadvertently deepen loneliness in the long run. By providing a convenient, low-effort substitute for human connection, AI companions can displace the necessary work involved in forming and maintaining real-world relationships. Engaging with an AI does not teach the user how to navigate the complexities of human social dynamics, how to manage conflict, how to compromise, or how to experience the profound joy and inevitable pain that come with true interpersonal vulnerability.

Instead, it offers a predictable, controlled interaction that removes the very elements crucial for growth and resilience in social settings. Users might find themselves becoming more reliant on the AI, further retreating from the messy, unpredictable, and often challenging world of human interaction.

When the illusion of a deep, reciprocal connection eventually falters, or the user recognizes the fundamental lack of shared experience, the initial relief from loneliness may give way to a more profound sense of isolation, compounded by the realization that their “relationship” was with an echo chamber.

Ultimately, the question of whether AI can be a “girlfriend” forces us to confront the very nature of connection and human vulnerability. Authentic human connection is characterized by mutual vulnerability, shared experience, the willingness to take risks, to disappoint and be disappointed, to grow through conflict, and to witness and be witnessed fully, flaws and all. It is a dynamic, evolving process that involves two autonomous beings engaging with each other’s full humanity.

An AI, no matter how sophisticated, cannot offer this. It provides a curated, controlled exposure of vulnerability from the user’s side, met by algorithmically generated reassurance.

There is no real risk for the AI, no genuine shared experience to build upon, and no true independent consciousness to connect with. When we seek to outsource our emotional needs and the complexities of human partnership to an AI, we are not truly “outsourcing human vulnerability”; rather, we are effectively avoiding the hard, often painful, but ultimately vital work of cultivating it within ourselves and with others.

In conclusion, the rise of AI companions represents a fascinating and concerning frontier in our quest for connection. While they offer a seductive escape from loneliness and provide a measure of comfort, their design, often mimicking traditional feminine roles of emotional labor, raises significant ethical questions.

They risk reinforcing gendered stereotypes, promoting unrealistic expectations for relationships, and ultimately providing an impoverished substitute for the richness and complexity of human interaction.

We must critically examine whether these technologies are genuinely bridging gaps in human connection or merely building sophisticated digital echo chambers that insulate us from the challenging yet profoundly rewarding work of authentic relationships.

The question “Can AI be a girlfriend?” ultimately serves as a powerful mirror, reflecting our own definitions of love, partnership, and the fundamental human need for connection. It compels us to consider whether we are truly advancing towards more meaningful bonds or retreating into an increasingly mediated and ultimately solitary existence.

About the Author

Plamen V. is a freelance writer/poet with published works online and in numerous US and UK literary magazines.